An implementation of the type inferer can be found on GitHub. We have also provided a playground that infers and checks FT programs.

The FT calculus

David Pearce's 2012 paper Sound and Complete Flow Typing with Unions, Intersections and Negations develops a subtyping operator for a simple FT (flow typing) calculus, consisting of the proper types

$\any$ is the top type, $\never$ is the bottom type, $\int$ is the type of integers, and $\left(\T_1, \ldots, \T_n\right)$ is a tuple type. $\lnot\T$ is a negation type, inhabited by all values in the universe not inhabited in $\T$. $\T_1 \land \ldots \land \T_n$ is an intersection type, inhabited by values of type $\T_1$ and $\T_2$ and $\ldots$ and $\T_n$. $\T_1 \lor \ldots \lor \T_n$ is a union type, inhabited by values of type $\T_1$ or $\T_2$ or $\ldots$ or $\T_n$.

The syntax of FT is defined on page 4 of Pearce's paper, and restated belowOur syntactic specification differs mildly from Pearce's in that we permit declarations with multiple formals and use a post-fix type annotation syntax.:

All types except $\never$ and $\lnot \T$ are typable by the user; the latter two are generated during flow typechecking. The flow typechecker narrows variable types at checked sites; the $\tt if\ x\ is\ \T$ construct above, for example, narrows $x$ to $\T$ in the $\tt then$ branch, and to "everything except $\T$", $\typeof\ x\ \land\ \lnot\T$, in the $\tt else$ branch. Another unique feature of the flow typechecker is that applications are checked by inlining argument types into a function body and then checking that body, rather than computing a universal return type once.

As a larger example, consider the FT program

First we check the declaration f, where x will be typed as $\int\lor(\int,\int)$ in the then branch, and $\any\land\lnot(\int\lor(\int,\int))$ in the

the else branch. When checking the application f 1, we

first check if $\typeof\ \mathtt{1}$ is a subtype of $\any$ (using a subtyping operator $\st$, described below), then check the body

of f with $x\co\typeof\ \mathtt{1}$ to determine the resulting type of the

application. At the conditional, we check if $\typeof\ x$ is a subtype of

$\int\lor(\int,\int)$. According to the result, we update the environment and check the appropriate branch.

Pearce's subtyping algorithm

The crucial contribution of Pearce's paper is the construction of a subtyping operator we will call $\st$, under which all of the following hold:

Designing $\st$ to be soundSoundness means no term is illegally subtyped: $\T_1\st\T_2\implies\forall x\co\T_1, x\co\T_2$. and completeCompleteness means the subtyping operator admits all subtypable terms: $\forall x\co\T_1, x\co\T_2\implies\T_1\st\T_2$. is not trivial, and Pearce's paper is a very well-written account of constructing a (semi-)practical$\st$ requires rewriting types to their Disjunctive Normal Form, which can require an exponential amount of time. This is catastrophic in the presence of large or nested tuples, whose DNF rewriting experiences a tremendous explosion in the number of steps. For example, our implementation has been unable to terminate normalization of $(((\int,\int)\land\lnot\int, (\int,\int)\land\lnot\int), ((\int,\int)\land\lnot\int, (\int,\int)\land\lnot\int))$ even after several minutes. Nevertheless, $\st$ is more than suitable for less pathological types. algorithm that is exactly so.

$\infer$: type inference for FT

In this cc, we will design a type inference algorithm $\infer$ that "fills in" omitted type annotations in an FT program. Rather than being a component of the typechecker, $\infer$ is an out-of-band tool that does its best to complete a FT program with annotations demanded by the syntax, which a developer may find cumbersome to write. Indeed, we will prove that $\infer$ is complete, being able to infer every typable FT program; sound, annotating only those programs that are typable; and minimal, always inferring the smallest legal type. In the most extreme case, like ML, $\infer$ can type a program with no annotations at all.

It is not necessary to understand the operation of $\st$However, we will again mention that Pearce's paper is a pleasant, worthwhile read. to understand our type inference algorithm, only to believe that

- $\st$ is sound and complete

- $\st$ induces a partial order over the universe of FT types

- the universe of FT types, partially ordered by $\st$, is a lattice

Preliminaries

$\Def$ Let $\FT$ be the set of all well-typed FT programs, adhering to the syntax and types stated by the definitions of $\T$ and $\tt t$.

$\Def$ Let $\T^\delta ::= ([\T\to\T^\delta]\T) ~+\!\!|~ \msf{infer}\ n $ denote a type shape that is either a type inference variable distinguished by an integer $n$, or a proper type form where references to proper types may be type shapes.

$\Def$ Let ${\tt t}^\delta ::= [t\to t^\delta, \T\rightarrow\T^\delta] {\tt t}$ denote the syntax of a FT term shape or program shape. In a ${\tt t}^\delta$, type annotations may be type shapes than proper typesIn practice, a parser can admit a term without type annotations entirely, inserting fresh inference variables as needed., and references to other terms maybe term shapes.

$\Def$ Let $\FTS$ be the set of all valid FT program shapes, adhering to the syntax ${\tt t}^\delta$. For simplicity of our conversation, we will demand also that $\FTS$ consists only of program shapes where all type annotations of form $\msf{infer}\ n$ are unique, and furthermore all function and formal names are uniqueFormalizing both these demands is cumbersome and not relevant to our discussion..

$\Def$ Let $\require{cancel}\xFT = \left\{\untypable\right\}$ be the singleton set, symbolically marking an program shape as untypable.

We can describe $\infer$ as a function $\require{cancel}\FTS\to\FT\sqcup\xFT$, yielding either a well-typed FT program or a marker that a program shape is untypableWe could have also defined $\infer$ as a partial function.. Let $\iota_\FT$ and $\iota_\xFT$ denote the canonical projections in the codomain of $\infer$.

Per the FT syntax, we see that type annotations need appear only the formal parameters of a function declaration; hence, formals are also the only place inference variables may appear in a program shape. Our conversation will assume that we are always discussing type annotations on formals.

Inferring single variables

As a first step in approaching this problem, we will examine the simple case of resolving a single inference variable in an otherwise completely-typed program.

$\Def$ Let $x$ be a formal with a inference variable annotation $\msf{infer}\ n$ in a program shape $\PS\in\FTS$. Then $x^?$ denotes the inference variable $\msf{infer}\ n$.

Since we are supposing that all formals and inference variables in program shapes are unique, $x^?$ is unique for the unique variable $x$.

$\Def$ Let $\infvars(\PS\in\FTS)$ denote the set of inference variables $\left\{x_1^?, \ldots, x_n^?\right\}$ attached to all formals $\left\{x_1,\ldots,x_n \right\}$ in $\PS$.

Now, we will describe an algorithm $\infer1\co (x\to\FTS)\to\FTS\sqcup\xFT$ that resolves a program shape $\FTS$ and an inference variable $x^?$ in it to a shape wherein $x^?$ is replaced with the minimal type among all types, if any, satisfying a set of constraints placed on the type of $x$. We recover these constraints from definitions and uses of $x$:

- A definition $x\leftarrow y$ demands $\typeof\ x \supt \typeof\ y$

- A use $y\leftarrow x$ demands $\typeof\ x \subt \typeof\ y$

Bounds

Definition/use constraints on a variable bound that variable's type, a notion we formalize in two definitions below.

$\text{Definition}(\LB).$ Define $\LB(x, \PS) := \left\{ \typeof\ s_1, \ldots, \typeof\ s_n \right\} \setminus \left\{\typeof\ x\right\}$ to be the set of lower bounds on $\typeof\ x$, recovered from all definitions $x\leftarrow s_1, \ldots, x\leftarrow s_n \in \PS$.

$\text{Definition}(\UB).$ Define $\UB(x, \PS) := \left\{ \typeof\ t_1, \ldots, \typeof\ t_n \right\} \setminus \left\{\typeof\ x\right\}$ to be the set of upper bounds on $\typeof\ x$, recovered from all uses $t_1\leftarrow x, \ldots, t_n\leftarrow x \in \PS$.

Solution sets

Upper and lower boundaries in the universe of types form a (possibly empty) region of types assignable to a constrained variable. We call this region the solution set of an inference variable.

$\Def$ $x^?$ is solvable in $\PS\in\FTS$ if $x^?\in\infvars(\PS)$ and $\LB(x, \PS)$, $\UB(x, \PS)$ both contain no inference variables.

When $x^?$ is solvable in $\PS$, $\LB(x,\PS)$ and $\UB(x,\PS)$ yield a set of proper types $\T$, for which $\st$ is well-defined.

$\Def$ When $x^?$ is solvable in $\PS$, define

to be the solution set of $x^?$.

$\text{Proposition 1}.$ Let $x_1^?$ be solvable in $\PS$. Then

-

If there exists a set of types $\T_1,\ldots,\T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS$ yields a well-typed program in $\FT$, $\forall\U\in\Sol(x_1,\PS)\quad [x_1^?\to\U,x_2^?\to\T_2,\ldots,x_n^?\to\T_n]\PS\in\FT$.

-

If $\Sol(x_1, \PS)=\emptyset$, $\nexists\T_1,\ldots,\T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS\in\FT$.

$\Pf$ The key is that $\T_1$ does not depend on $\T_2,\ldots,\T_n$.

-

The only typing rules the formal $x_1\co \T_1$ is involved with are subtyping checks at definitions and uses. At a definition $x_1\leftarrow y$ we demand $\T_1\supt \typeof\ y$; if $y=x_1$ this holds by reflexivity of $\st$, otherwise $\T_1\supt\typeof\ y$ for all $x_1\leftarrow y$ where $x\ne y$ implies $\forall\S\in\LB(x,P);\T_1\supt\S$. The argument for uses similarly demands $\forall\U\in\UB(x_1,P);\T_1\subt\U$. If there exist $\T_1,\ldots,\T_n$ such that $[x_1^?\to \T_1,\ldots,x_n^?\to\T_n]\PS\in\FT$, $\T_1$ need only satisfy the above conditions; all types in $\Sol(x, \PS)$ satisfy these conditions.

-

By 1, if there exist $\T_1,\ldots,\T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS\in\FT$, $\T_1\in\Sol(x_1,\PS)$.

Existence and construction of $\infer1$

Proposition 1 tells us that finding the solution set for a variable completely tells us whether that variable is typable, and gives us valid typings for it in the case that it is. We can now formally define what it means to infer the type of a variable, give conditions on the existence of an inferred type, and give a precise formula for inferring one variable in a program.

$\text{Definition}(\Minimal).$ When $x^?$ is solvable in $\PS$, define

to be the minimal inferred type of $x$ in $\PS$, when it exists.

$\text{Proposition 2}.$ When $\Sol(x, \PS)$ is non-empty, $\Minimal(x,\PS)$ exists and is given by

$\Pf$ First of all, the equality is correct as $\never$ is uninhabited. The case when $\LB(x,\PS)=\emptyset$ is trivial. For the other, let $\T\in\Sol(x,\PS)$. Then $\forall \S\in\LB(x,\PS) ;\T\supt\S$, so $\T\supt\bigvee\LB(x,\PS)$. Hence

both hold, so $\bigvee\LB(x,\PS)\in\Sol(x,\PS)$ and is its infimum under $\st$.

$\text{Corollary 1}.$ $\Minimal(x, \PS)$ exists iff $\never\vee\bigvee\LB(x,P)\st\bigwedge\UB(x,P)$.

$\text{Corollary 2}.$ When it exists, $\Minimal(x, \PS) = \never\vee\bigvee\LB(x,\PS)$.

$\text{Definition}(\infer1).$ When $x^?$ is solvable in $\PS$, define $\T_x = \never\vee\bigvee\LB(x,\PS)$ and

When $\infer1(x, \PS) = \iota_\FTS P^{\delta+x}$, we say that $P^{\delta+x}$ is $\PS$ inferred with $x\co \T_x$.

$\text{Lemma 1(Properties of $\infer1$)}.$ Let $x_1^?$ be solvable in $\PS$. Then

-

If $\exists \T_1, \ldots, \T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS\in\FT$, then $\infer1(x_1,\PS) = \iota_\FTS P^{\delta+x}$ and

- $[x_2^?\to\T_2,\ldots,x_n^?\to\T_n]P^{\delta+x}\in\FT$

- Taking $P^{\delta+x}$ as $\PS$ inferred with $x\co \T_x$, $\T_x\st\T_1$ for all satisfying sequences $\T_1,\ldots,\T_n$.

-

$\nexists \T_1, \ldots, \T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS\in\FT$, iff $\infer1(x_1,\PS) = \iota_\xFT\untypable$.

$\Pf$ By application of Proposition 1, 2, and Corollaries 1, 2.

Dealing with cycles

At this point, it may seem that $\infer1$ gives us a constructive, sound, and complete algorithm for inferring the minimal type of an inference variable in a program shape. Indeed, we may attempt write a complete $\infer$ algorithm the following way:

$\text{Definition($\infer$: No Cycles)}$

- $\text{Algorithm $\infer$($\PS$):}$

- $\qquad\text{if }\PS\in\FT$

- $\qquad\text{then return }\iota_\FT\PS$

- $\qquad\text{else}$

-

- $\qquad\qquad\text{if }ivars=\emptyset$

- $\qquad\qquad\text{then return }\iota_\xFT\untypable$

- $\qquad\qquad\text{else}$

- $\qquad\qquad\qquad x\leftarrow x\in ivars$

- $\qquad\qquad\qquad\text{if }\infer1(x,\PS) = \iota_\FTS(P^{\delta+x})$

- $\qquad\qquad\qquad\text{then }\text{Solve}(P^{\delta+x})$

- $\qquad\qquad\qquad\text{else return }\iota_\xFT\untypable$

The issue with this algorithm lies on lines 5 and 6: if we have a program shape with inference variables, none of which are solvable, we cannot progress. This means that while $\infer1$ is complete for solvable variables, this definition of $\infer$ is not complete for program shapes!

As an example of a program shape for which the definition above is incomplete, consider

we have that $\LB(x, \PS) = \left\{y^?,\int\right\}$ and $\LB(y, \PS) = \left\{x^?\right\}$, so no variable is solvable. But $[x^?\to\int, y^?\to\int]\PS$ yields the following well-typed program

Throughout the rest of this section, we describe how to resolve inference variables in the presence of cycles, and prove that our updated method is complete. Our roadmap will be to

- partition inference variables into strongly connected components

- prove that all inference variables in a strongly connected component have a single, unique minimal solution, if any

- show that potential solutions and verification of solutions can be done in two passes

- wrap everything up in a sound, complete, and minimal $\infer$ algorithm

Solution orders

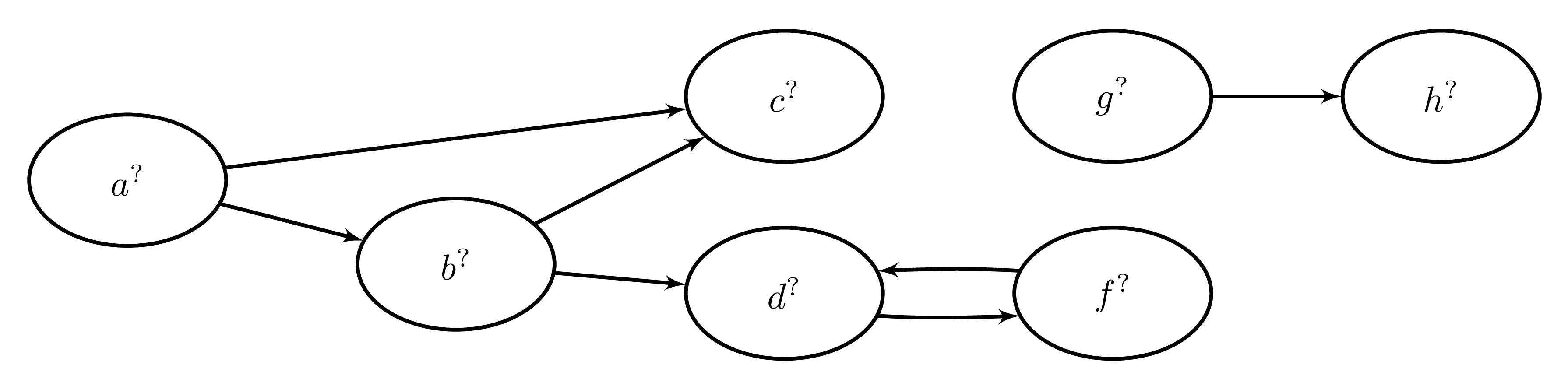

$\Def$ Let $\PS\in\FTS$. The inference dependency graph $\IDG(\PS)$ of $\PS$ is a directed graph where

-

the vertices are the inference variables $\infvars(\PS)$

-

there is an edge $x^?\to y^?$ exactly when $x^?\in\LB(y, \PS)$

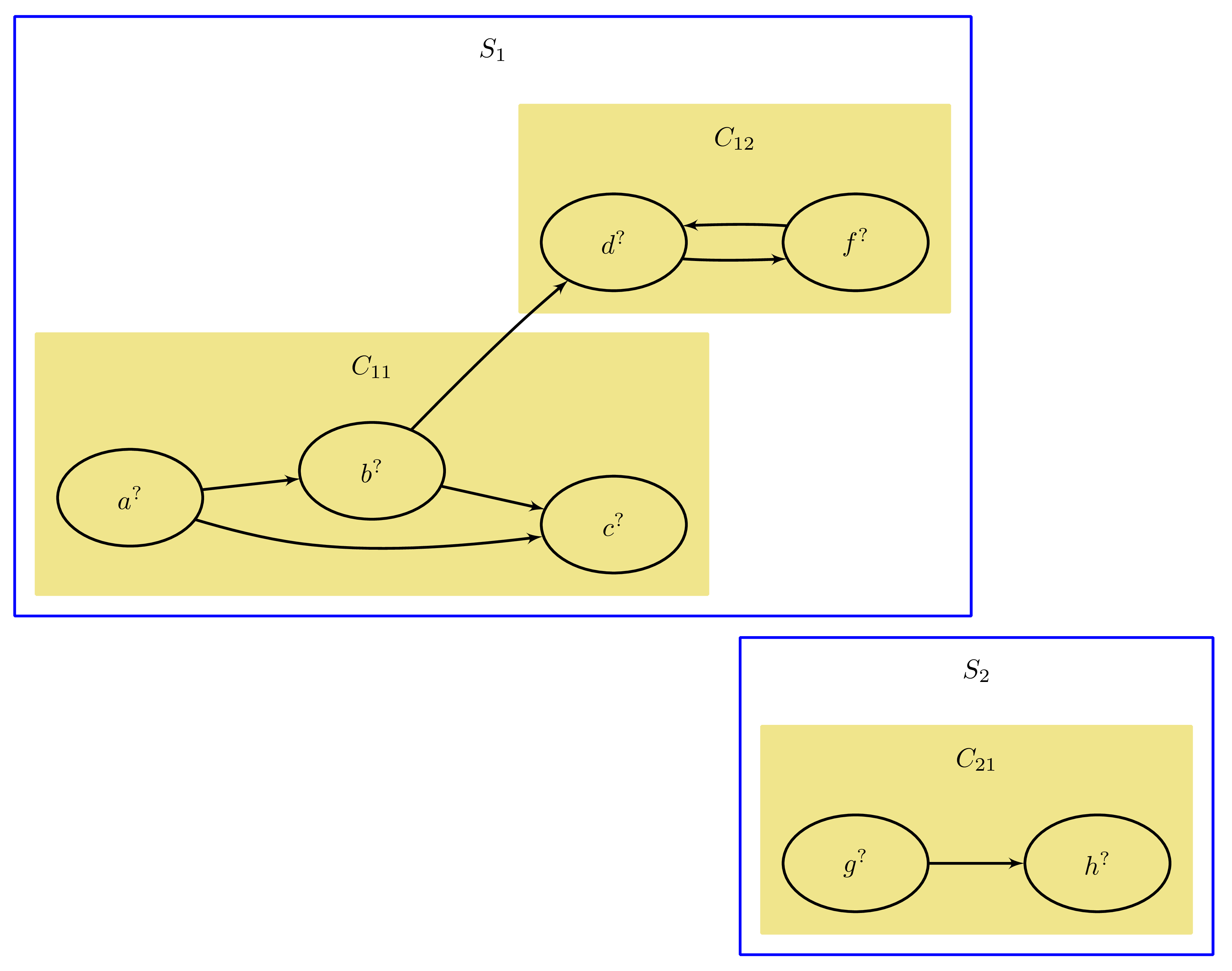

$\text{Definition($\SolOrder$)}.$ Let $\PS\in\FTS$. Let $S_1, \ldots, S_n$ denote the connected components of $\IDG(\PS)$, ordered arbitrarily. Let $C_{i1}, \ldots, C_{in_i}$ denote the strongly connected components of $\S_i$, ordered topologically under condensation.

Then the solution order $\SolOrder(\PS)$ is the strict preorder given by $C_{11} \lt \ldots \lt C_{1n_1}\ldots \lt C_{n1}\ldots \lt C_{nn_n}$.

$\text{Proposition 3}.$ For a given strongly connected component $C_{ij} \in \SolOrder(\PS)$, for any vertex $v\in C_{ij}$, there exists an edge $u\to v$ only if $u\in C_{i1} \lor \ldots \lor u\in C_{ij}$.

$\Pf$ The strongly connected components $C_{11},\ldots, C_{(i-1)n_{i-1}}, C_{(i+1)n_{i+1}},\ldots, C_{n n_n}$ cannot contain any vertices that have an edge to $v$ because they come from disjoint connected components in $\IDG(\PS)$.

For the other components, suppose by way of contradiction there is an edge $u\to v$ where $u\in C_{ik}, k > j$. Then since the condensation of $S_i$ is given by the graph $C_{i1}\to\ldots\to C_{ij}\to\ldots\to C_{ik}\to\ldots\to C_{in_i}$, there is a cycle $C_{ij}\to\ldots\to C_{ik}\to C_{ij}$. But then $C_{ij},\ldots,C_{ik}$ are not maximal, a contradiction.

For example, suppose we have a program shape with the following inference dependency graph:

then its connected components and their condensations are given by:

yielding the solution order $C_{11}\lt C_{12}\lt C_{21}$.

Solving for minimal types

$\Def$ Let $\TS$ be a type shape. The disjunctive normal form $\DNF(\TS)$ of $\TS$ is a type shape of form $\displaystyle\bigvee_i\bigwedge_j {\TS}^*_{i, j}$, where

and $\TS$ and $\DNF(\TS)$ are equivalent under $\st$.

An algorithmic account of $\DNF$ and proof of the properties we claim is given in Pearce's paper (Definition 6, Lemmas 4-6).

$\Def$ Let $\SolOrder(\PS) = C_{1}\lt\ldots\lt C_n$. Then $C_1$ is the first-solvable component of $\PS$.

$\text{Definition($\Reify$).}$ Let $C$ be the first-solvable component of $\PS$ and $x^?\in \vert(C)$. Then $\Reify$ is a function defined as

- $\text{Algorithm $\Reify$($x$, $\PS$):}$

- $\quad \text{Algorithm $\Step$($\TS$, $seen$): match $\DNF(\TS)$ with}$

- $\quad\quad \ldots\land\TS_i\land y^?\land\TS_j,\ldots \text{ if } y\notin seen\Ra \Step(\ldots\land\TS_i\land(\bigvee\LB(y, \PS))\land\TS_j\land\ldots,\;seen\cup\{y\})$

- $\quad\quad \ldots\land\TS_i\land y^?\land\TS_j,\ldots \text{ if } y\in seen\Ra \Step(\ldots\land\TS_i\land\TS_j\land\ldots,\;seen)$

- $\quad\quad \ldots\land\TS_i\land\ldots \text{ if } y^?\subset\TS_i\Ra\Step(\ldots\land[y^?\to\never]\TS_i\land\ldots,\;seen)$

- $\quad\quad \ldots\lor\TS_i\lor y^?\lor\TS_j,\ldots \text{ if } y\notin seen\Ra \Step(\ldots\lor\TS_i\lor(\bigvee\LB(y, \PS))\lor\TS_j\lor\ldots,\;seen\cup\{y\})$

- $\quad\quad \ldots\lor\TS_i\lor y^?\lor\TS_j,\ldots \text{ if } y\in seen\Ra \Step(\ldots\lor\TS_i\lor\TS_j\lor\ldots,\;seen)$

- $\quad\quad \ldots\lor\TS_i\lor\ldots \text{ if } \TS_i \supseteq \TS_j = (\US_1,\ldots,\US_n) \text{ and } y^?\subset\TS_j$

- $\quad\quad\quad\quad \Ra\Step(\ldots\lor[y^?\to\never]\TS_i\lor\ldots,\;seen)$

- $\quad\quad \ldots\lor\TS_i\lor\ldots \text{ if } y^?\subset\TS_i\Ra\Step(\ldots\lor[y^?\to\any]\TS_i\lor\ldots,\;seen)$

- $\quad\quad \text{else }\Ra\TS$

- $\quad \Step(\bigvee\LB(x,\PS), \left\{x\right\})$

where lines 3-5 match a intersection type being the singleton variant of the union form yielded by $\DNF$, matching of $y^?$ is variable in $y \in \infvars$. The abuse of notation $y^?\subset\TS$ means that $y^?$ appears in $\TS$, and $y^?\subseteq\TS$ means that $\TS=y^?$ or $y^?\subset\TS$, all under syntactic equality. Lastly, $\ldots\lor\T\lor\ldots$ should be considered a finite type; we omit terminal types for space.

$\text{Proposition 4.}$ $\Reify(x, \PS)$ terminates and yields a proper type for all suitable $x$ and $\PS$, as described in the definition.

$\Pf$ For termination, observe that $\Step(\TS,\ seen)$ recurses so long as there is an inference variable in $\TS$ matched by a rewrite rule, but each rule decreases the number of inference variables in the next iteration's type. The only exceptions are line 7 and 12, but those rules are reached at most once each for a finite number of inference variables. As for yielding a proper type, it is enough to see that all cases in which an inference variable can appear in a DNF-normalized type are covered by the left-hand sides of lines 7, 8, and 11; all other rules are special cases.

$\text{Lemma 2.}$ Let $C$ be the first-solvable component of $\PS$ and $x_1^?\in \vert(C)$. Let $\left\{x_1^?,\ldots,x_n^?\right\}=\infvars(\PS)$. If there exists a set of types $\T_1,\ldots,\T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS\in\FT$, then $[x_1^?\to\Reify(x,\PS),\ldots,x_n^?\to\T_n]\PS\in\FT$, and furthermore, $\Reify(x,\PS)\st\T_1$ among all such sets of types $\T_1,\ldots,\T_n$.

$\Pf$ As in Lemma 1 and its preliminaries, the minimal type (when it exists) of an inference variable $x^?$ is the union of its lower bounds. Line 14 of $\Reify$ computes this; it remains only to show that $\Step$ retains the minimality of a type in the FT calculus. Intuitively, the thing to keep in mind is that recursive unions "grow" a type and recursive intersections "shrink" a type. We will prove minimality by case analysis on lines 7-12; lines 3-5 are analogous special cases for when there are no unions in $\TS$.

-

Line 7: Since $y^?$ is a lower bound of $x$, inlining the lower bounds of $y$ does not change the resolved type of $x$.

-

Line 8: Having already inlined the lower bounds of a inference variable, inlining them again in the presence of a union would not modify the total type, as $\T\lor\ldots\lor\T\lor\ldots = \T\lor\ldots$.

-

Line 9: This is the case of a recursive tuple type, for example $x^? = \T_1\lor\ldots\lor\T_i\lor(\ldots,x^?,\ldots)\lor\T_j\lor\ldots\lor\T_n$. Clearly this is a type of infinite depth bounded by $\any$ at position $x$ when constructed positively; this yields the finitary type $\T_x = \T_1\lor\ldots\lor\T_i\lor(\ldots,\any,\ldots)\lor\T_j\lor\ldots\lor\T_n$. We need to show also that there is no bounding finitary type $\W$ at position $x$ consisting of negations ("approaching from the top"). By way of contradiction, suppose there is such a finitary type; since it is bounded above by $\any$, $\W$ is of the form $\any\land\lnot\S_1\land\ldots\land\lnot\S_n$. Either $\T_1\lor\ldots\lor\T_i\lor\T_j\lor\ldots\lor\T_n \supt \any$ or else $\S = \T_1\lor\ldots\lor\T_i\lor\T_j\lor\ldots\lor\T_n \subt \any$. In the former case $\W$ must resolve to $\any$. In the latter case each nested level of the recursive tuple in $x^?$ induces at least one additional negation in $\W$, so $\W$ must be infinite.

-

Line 11: This is like the case on line 9, but covers the remaining cases of recursive intersections and negations. The argument for intersections is similar to that of tuples above, except that a recursive intersection continuously constricts the total type, bounding it to $\never$. This is not the case for intersections that recurse flatly on themselves; for example, $\T = \T\land\int$ is bound by $\int$, not $\never$. However, $\DNF$ normalization of $\PS$ ensures that in the case on line 9, the recursion is not flat. Flat recursion of intersections is handled on line 4.

Recursive negations can be reduced to a special form of intersections, where the above argument then applies. The reduction is due to DeMorgan; $x^? = \lnot x^?\lor\T \implies x^? = \lnot (\lnot x^?\lor\T)\lor\T = (x^?\land\lnot\T)\lor\T$.

-

Line 12: Trivial, does not modify the type.

Thus, we have shown if there is any finitary type representing the minimal type of inference variable, $\Reify$ finds it.

Putting it all together

$\text{Definition($\infer$)}$ $\infer$ is a function $\FTS\to\FT\sqcup\xFT$, defined below.

- $\text{Algorithm Infer($\PS$):}$

- $\qquad P\leftarrow\PS$

- $\qquad \text{for $C$ in $\SolOrder(P)$}:$

- $\qquad\qquad \text{for $x^?$ in $\vert(C)$}:$

- $\qquad\qquad\qquad \T_x\leftarrow\Reify(x, \PS)$

- $\qquad\qquad\qquad P\leftarrow \left([x^?\to\T_x] P\right)$

- $\qquad \text{for $x^?$ in $\infvars(\PS)$}:$

- $\qquad\qquad \T_x\leftarrow \typeof\ x \text{ in } P$

- $\qquad\qquad \U\leftarrow\bigwedge\UB(x, P)$

- $\qquad\qquad \text{if } \T_x\not\lt:\U \text{ then return } \iota_\xFT\untypable$

- $\qquad \text {return } \iota_\FT P$

Lines 3-6 of the algorithm find potential solutions to the inference variables of $\PS$, and lines 7-10 verify those solutions by comparing them to the (now-resolved) upper bounds of corresponding variables. If verification succeeds, a well-typed program in $\FT$ is returned; otherwise we mark the program shape as uninferrable, and hence untypable.

Note that $\infer$ is well-defined; the only thing that may be of concern is the call to $\Reify(x,P)$, as $\Reify$ is only defined $x\in C$ where $C$ is first-solvable in $P$. But since reification is done in linear solution order $C_1,\ldots,C_n$, once all inference variables in $C_1$ are reified in $P$, $C_2$ becomes first-solvable in $P$.

$\text{Lemma 3(Soundness of $\infer$)}.$ If $\infer(\PS) = \iota_\FT P$, it is indeed the case that $P\in\FT$.

$\Pf$ By Lemma 2, lines 3-6 rewrite every inference variable $x^?$ with its minimal legal type $\T_x = \bigvee \LB(x, \PS)$, if one exists. The condition of existence is the same as that in Proposition 1 and Corollary 1; namely, $T_x \st \UB(x, P)$, which is verified for all possible solutions of inference variables on lines 7-10.

$\text{Lemma 4(Completeness of $\infer$)}.$ Let $\PS\in\FTS$ where $\infvars(\PS)=\left\{x_1^?,\ldots,x_n^?\right\}$. If there exists as set of proper types $\T_1,\ldots,\T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS \in \FT$, $\infer(\PS) = \iota_\FT P$.

$\Pf$ Corollary of Lemma 2.

$\text{Lemma 5(Minimalism of $\infer$)}.$ Let $\PS\in\FTS$ where $\infvars(\PS)=\left\{x_1^?,\ldots,x_n^?\right\}$ and $\infer(\PS) = \iota_\FT P$. Let $\T_1,\ldots,\T_n$ such that $[x_1^?\to\T_1,\ldots,x_n^?\to\T_n]\PS = P$. Then among all sets of types $\U_1,\ldots,\U_n$ such that $[x_1^?\to\U_1,\ldots,x_n^?\to\U_n]\PS\in\FT$, $\T_1\st\U_1,\ldots,\T_n\st\U_n$.

$\Pf$ Corollary of Lemma 2.

Conclusion

In this cc, we have presented a sound, complete, and minimal algorithm for type inference of mandatory type annotations in the flow-typing type calculus developed by Pearce, 2012. Our algorithm is constructive and syntax-directed, resolving inference variables from types demanded at definition sites and verifying the legality from types demanded at usage sites.

Our approach to type inference is similar to the classic methods (a la Algorithm W) - collect some constraints and perform unification. Our unification step is more appropriately called resolution, and is more involved than classical unification. In our case, the fact that the FT type universe forms a complete lattice is particularly important.

The finitary nature of the FT calculus complicates the resolution process in the presence of type variable cycles, as an inference variable can incur cyclic constraints that result in a type of infinite depth. Our approach resolves such types to their "closest" legal finitary type by translation to disjunctive normal form, a process that Pearce has noted is exponential in the depth of a type in the worst case (Pearce 2012, section 3.2), and then applying a rewrite procedure whose time and space complexities are linear in variables and type depths but whose rewrite rules are somewhat tricky. The addition of (mutually) recursive types to the FT calculus would trivialize the solution to this issue, though of course would come with its own set of challenges (e.g. type simplification and reformulating $\st$ to be sound and complete).

Takeaways

This is a small problem I toyed with for a couple days, and then took far longer to write up as in this cc. I think it is rather interesting, because type inference in the presence of subtyping is generally considered difficult (though that is usually because of record types and such, not present in FT), and because the FT calculus does not make resolution of certain issues trivial (e.g. cycles cannot be resolved as recursive types).

Designing a compiler with a type inferer out-of-band of the typechecker, as we did here, is also interesting. While the inferer will need to depend a lot on the checker, this decoupling means that multiple inferers for different purposes could be designed. Some ideas include:

- A sound, complete, and minimal inferer, like the one presented here

- An inferer that optimizes for readability of types, avoiding deep nesting

- An inferer that optimizes for a minimal number of unique types

- An inferer that solves for maximal types (principal types in functional languages)